
12/9/2022 Adversarial network radar

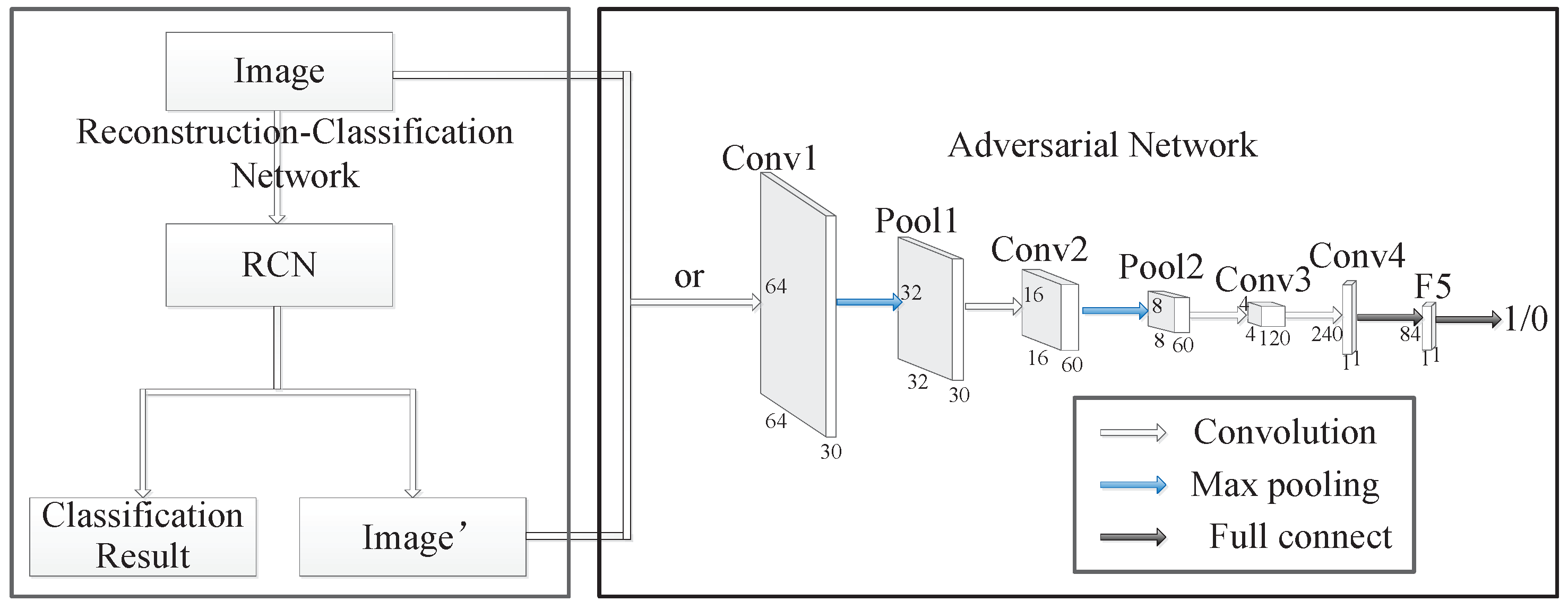

I presented some preliminary work on using Generative Adversarial Networks to learn distributed representations of documents at the recent NIPS workshop on Adversarial Training. In this post I provide a brief overview of the paper and walk through some of the code.

Representation learning has been a hot topic in recent years, driven in part by the desire to apply the impressive results of Deep Learning models on supervised tasks to the areas of unsupervised learning and transfer learning. There is a large variety of approaches to representation learning, but the basic idea is to learn a set of features from data and then use these features for some other task where you may only have a small number of labelled examples (such as classification). The features are typically learned by trying to predict various properties of the underlying data distribution, or by using the data to solve a separate (possibly unrelated) task for which we do have a large number of labelled examples.

The ability to do this is desirable for several reasons. In many domains there may be an abundance of unlabelled data available to us, while labelled data is difficult and/or costly to acquire. Some people also feel that we will never be able to build more generally intelligent machines using purely supervised learning (a viewpoint that is illustrated by the now infamous LeCun cake slide).

Word (and character) embeddings have become a standard component of Deep Learning models for natural language processing, but there is less consensus around how to learn representations of sentences or entire documents. One of the most established techniques in the literature for learning unsupervised document representations is Latent Dirichlet Allocation (LDA). Later neural approaches to modeling documents have been shown to outperform LDA when evaluated on a small news corpus (discussed below). The first of these is the Replicated Softmax, which is based on the Restricted Boltzmann Machine; it was later surpassed by a neural autoregressive model called DocNADE. In addition to autoregressive models like the NADE family, there are two other popular approaches to building generative models at the moment: Variational Autoencoders and Generative Adversarial Networks (GANs).

Modeling Documents with Generative Adversarial Networks

This work is an early exploration to see if GANs can be used to learn document representations in an unsupervised setting. In the original GAN setup, a generator network learns to map samples from a (typically low-dimensional) noise distribution into the data space, and a second network called the discriminator learns to distinguish between real data samples and fake generated samples. The generator is trained to fool the discriminator. The intended goal is a state where the generator has learned to create samples that are representative of the underlying data distribution, and the discriminator is unsure whether it is looking at real or fake samples.
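As a rough illustration of the original GAN setup described in this post, the sketch below writes out the two competing objectives for a toy one-dimensional problem. It is not the code from the paper: the affine `generator`, the logistic `discriminator`, their parameters (`a`, `b`, `w`, `c`) and the Gaussian "real" data are all assumptions made for illustration, and the generator uses the common non-saturating form of the loss.

```python
import math
import random


def sigmoid(t):
    return 1.0 / (1.0 + math.exp(-t))


def generator(z, a, b):
    """Map a noise sample z into the (here 1-D) data space. Hypothetical toy model."""
    return a * z + b


def discriminator(x, w, c):
    """Return the estimated probability that x is a real sample. Hypothetical toy model."""
    return sigmoid(w * x + c)


def discriminator_loss(d_real, d_fake):
    """Binary cross-entropy: real samples are labelled 1, generated samples 0."""
    real_term = -sum(math.log(p) for p in d_real) / len(d_real)
    fake_term = -sum(math.log(1.0 - p) for p in d_fake) / len(d_fake)
    return real_term + fake_term


def generator_loss(d_fake):
    """Non-saturating generator loss: low when the discriminator rates fakes as real."""
    return -sum(math.log(p) for p in d_fake) / len(d_fake)


rng = random.Random(0)
real = [rng.gauss(3.0, 1.0) for _ in range(8)]   # assumed "real" data
noise = [rng.gauss(0.0, 1.0) for _ in range(8)]  # samples from the noise distribution
fake = [generator(z, a=1.0, b=0.0) for z in noise]

# A discriminator with w = 0 and c = 0 outputs 0.5 for every input,
# i.e. it cannot tell real samples from generated ones.
d_real = [discriminator(x, w=0.0, c=0.0) for x in real]
d_fake = [discriminator(x, w=0.0, c=0.0) for x in fake]

print(discriminator_loss(d_real, d_fake))  # equals 2 * ln(2)
print(generator_loss(d_fake))              # equals ln(2)
```

When the discriminator outputs 0.5 everywhere, its loss is 2 ln 2, which corresponds to the "unsure" state that training aims for. In a real implementation both functions would be neural networks, and the two losses would be minimised alternately with a gradient-based optimiser.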
